Neural Technique for Language Translation

Keywords:

Neural Machine Translation, Keras, Recurrent Neural Network, LSTM, Encoder and Decoder

Abstract

Objectives: To develop a neural machine translator that can be integrated into chat applications, helping users who are more comfortable communicating in their regional languages.

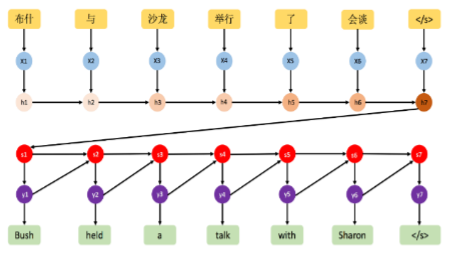

Methods: Neural machine translation (NMT) is an approach to machine translation that uses an artificial neural network to predict the likelihood of a sequence of words, typically modeling entire sentences in a single integrated model. NMT provides more accurate translation by taking into account the context in which a word is used, rather than translating each word in isolation.
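
The idea of predicting the likelihood of a word sequence can be illustrated with a minimal sketch. It is not the authors' model: the probability table `TOY_MODEL`, the romanized Hindi tokens, and both function names are hypothetical, and each word is conditioned only on the previous word, whereas an LSTM decoder would condition on the full history and on the encoded source sentence.

```python
import math

# Hypothetical toy table: P(next_word | previous_word).
# In a real NMT system a decoder network (e.g. an LSTM followed by a
# softmax layer) would produce these probabilities, conditioned on the
# source sentence as well.
TOY_MODEL = {
    "<s>": {"main": 0.7, "tum": 0.3},
    "main": {"jata": 0.6, "khata": 0.4},
    "jata": {"hoon": 0.9, "</s>": 0.1},
    "hoon": {"</s>": 1.0},
}


def sequence_log_likelihood(target_words, model=TOY_MODEL):
    """Chain rule: sum log P(y_t | y_{t-1}) over the target plus end marker."""
    previous = "<s>"
    total = 0.0
    for word in list(target_words) + ["</s>"]:
        prob = model.get(previous, {}).get(word, 0.0)
        if prob == 0.0:
            return float("-inf")  # sequence is impossible under the toy table
        total += math.log(prob)
        previous = word
    return total


def greedy_decode(model=TOY_MODEL, max_len=10):
    """Pick the most likely next word at each step until the end marker."""
    previous = "<s>"
    output = []
    for _ in range(max_len):
        candidates = model.get(previous)
        if not candidates:
            break
        word = max(candidates, key=candidates.get)
        if word == "</s>":
            break
        output.append(word)
        previous = word
    return output


if __name__ == "__main__":
    sentence = greedy_decode()
    print(sentence, math.exp(sequence_log_likelihood(sentence)))
```

Greedy decoding is used here only because it is the simplest strategy; beam search is a common alternative that keeps several candidate sequences at each step.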

Findings: We built our model using LSTM. It accurately produced the Hindi sentences corresponding to English sentences of five words or fewer. For sentences longer than five words, translations were also produced, but not all were accurate: in a test of 100 such sentences, roughly 75 to 80 were translated accurately.
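
A length-bucketed evaluation of this kind could be computed along the following lines. This is a sketch under stated assumptions: the abstract does not say how accuracy was judged, so exact string match against a reference is assumed here, and the function name `accuracy_by_length` and its `threshold` parameter are hypothetical.

```python
def accuracy_by_length(examples, threshold=5):
    """Exact-match accuracy for short and long source sentences.

    examples: iterable of (source, reference, prediction) strings.
    A source counts as "short" if it has at most `threshold` words.
    Exact match is an assumed criterion, not one stated in the abstract.
    """
    counts = {"short": [0, 0], "long": [0, 0]}  # [correct, total]
    for source, reference, prediction in examples:
        bucket = "short" if len(source.split()) <= threshold else "long"
        counts[bucket][1] += 1
        if prediction.strip() == reference.strip():
            counts[bucket][0] += 1
    # Return None for an empty bucket rather than dividing by zero.
    return {
        bucket: (correct / total if total else None)
        for bucket, (correct, total) in counts.items()
    }
```

Exact match is a strict criterion for translation, since a sentence can have several valid renderings; a graded metric such as BLEU or human judgment would be a reasonable substitute.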

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

IJISAE open access articles are licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. This license requires users to give appropriate credit, provide a link to the license, and indicate if changes were made; if they remix, transform, or build upon the material, they must distribute their contributions under the same license as the original.