Target User Specific Q-Learning (TUQL) Personalized Product Recommendation

Keywords:

Product recommendation, recurrent neural network, sentiment analysis

Abstract

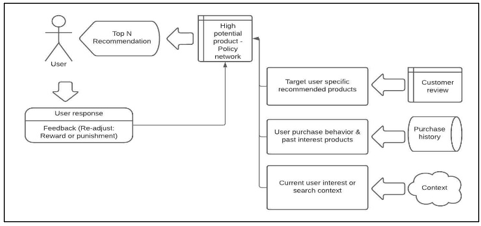

Recommendation systems play an important role in predicting relevant products from a large number of available products. Conventional recommendation systems focus on item-user interactions and predict products based on similar user interests or item purchase history. Recommendation was later enhanced by context-aware and sequence-based approaches, which predict from the current search and from browsing-session information, respectively. An important current challenge in recommendation is addressing both accuracy and serendipity, that is, predicting unknown products that the user would be interested in. In this paper, we propose a hybrid recommendation framework to overcome this challenge: the target user specific Q-learning (TUQL) reinforcement approach, which predicts Top-N recommendations based on the current context, the user's past purchase behavior, and temporal data, and which takes the real target end user into account. Experimental results show that the proposed system outperforms existing product recommendation systems in prediction accuracy on relevant products for target users and requires less computation time.
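The abstract does not give the TUQL update rule or state design, so the following is only a minimal sketch of generic tabular Q-learning applied to Top-N ranking, not the authors' method. The state (a browsing context), the actions (candidate products), the reward values, and the names `q_learning` and `top_n` are all illustrative assumptions.

```python
from collections import defaultdict


def q_learning(transitions, alpha=0.5, gamma=0.9, epochs=100):
    """Generic tabular Q-learning over logged (state, action, reward, next_state) tuples.

    Assumption: a state is a context label, an action is a recommended product,
    and next_state is None when the session ends after the recommendation.
    """
    q = defaultdict(lambda: defaultdict(float))
    for _ in range(epochs):
        for state, action, reward, next_state in transitions:
            # Best estimated value from the next state (0.0 if terminal or unseen).
            next_values = q[next_state].values() if next_state is not None else []
            best_next = max(next_values, default=0.0)
            td_target = reward + gamma * best_next
            q[state][action] += alpha * (td_target - q[state][action])
    return q


def top_n(q, state, n):
    """Return the n actions with the highest Q-value for the given state."""
    ranked = sorted(q[state].items(), key=lambda kv: kv[1], reverse=True)
    return [action for action, _ in ranked[:n]]


# Hypothetical toy log: reward 1.0 = purchased, 0.5 = clicked, 0.0 = ignored.
transitions = [
    ("phones", "case", 1.0, None),
    ("phones", "charger", 0.0, None),
    ("phones", "earbuds", 0.5, None),
]
q = q_learning(transitions)
print(top_n(q, "phones", 2))
```

In this toy log every transition is terminal, so each Q-value simply converges to its observed reward; a user-specific variant would presumably fold user identity, purchase history, and temporal features into the state.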




License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.
