Sarcasm Identification in Reddit Online Discussion Forum Using Fully Contextual CASCADE

Keywords:

BERT, BiLSTM, CASCADE, CNN, SARC, Word2Vec

Abstract

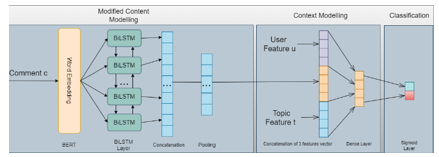

Sarcasm is a form of figurative language that cannot be easily detected using simple sentiment analysis because of the contradiction between its literal and intended meaning. Sarcasm detection has been studied using various methods and algorithms. One of these is the ContextuAl SarCasm DEtector (CASCADE), which uses both content and contextual features to detect sarcasm in comments. The model uses a CNN to extract content-based features from the comments, and Word2Vec and a CNN to extract contextual features for user and discourse embeddings. However, content feature extraction can be further improved with a transformer, which captures the relationships between words better and therefore provides richer contextual knowledge of the comments for content-based modelling. This study proposes an enhancement of CASCADE, taken as the baseline model: its CNN-based content modelling is replaced with a BERT-BiLSTM method, and the resulting content representation is concatenated with CASCADE’s user and discourse embeddings. The proposed model is then used to detect sarcasm in SARC, a corpus of Reddit online discussion forum comments built for sarcasm research. The proposed method gives a slight increase in accuracy and F1-score compared with the previous research and performs best when trained on a balanced dataset. This research is still at an early stage, and results may improve further with hyperparameter tuning and a cleaner method; even so, it already improves on the baseline in both accuracy and F1-score.
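The fusion step described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the vector sizes, the random stand-in vectors, the untrained logistic layer, and the function names `fuse`, `predict_sarcasm`, and `accuracy_and_f1` are all assumptions made for illustration. In the actual model the content vector would come from BERT-BiLSTM and the user and discourse vectors from CASCADE.

```python
import math
import random

# Hypothetical toy dimensions; real encoders produce much larger vectors.
CONTENT_DIM, USER_DIM, DISCOURSE_DIM = 4, 3, 2


def fuse(content_vec, user_vec, discourse_vec):
    """Concatenate content, user, and discourse features into one input vector."""
    return list(content_vec) + list(user_vec) + list(discourse_vec)


def predict_sarcasm(features, weights, bias):
    """Logistic layer: probability that the comment is sarcastic."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def accuracy_and_f1(y_true, y_pred):
    """Accuracy and F1-score for the positive class (1 = sarcastic)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return accuracy, f1


rng = random.Random(0)
# Random stand-ins for the three encoders (assumption, not real embeddings).
content_vec = [rng.uniform(-1, 1) for _ in range(CONTENT_DIM)]      # BERT-BiLSTM output
user_vec = [rng.uniform(-1, 1) for _ in range(USER_DIM)]            # CASCADE user embedding
discourse_vec = [rng.uniform(-1, 1) for _ in range(DISCOURSE_DIM)]  # CASCADE discourse embedding

features = fuse(content_vec, user_vec, discourse_vec)
weights = [rng.uniform(-0.5, 0.5) for _ in features]  # untrained, random weights
prob = predict_sarcasm(features, weights, bias=0.0)
print(len(features), round(prob, 3))

# Toy labels, only to show how the two reported metrics are computed.
acc, f1 = accuracy_and_f1([1, 0, 1, 0], [1, 0, 0, 0])
print(acc, round(f1, 3))
```

Concatenation keeps the three feature sources separate up to the classifier, so the content encoder can be swapped (here, CNN for BERT-BiLSTM) without changing how the user and discourse embeddings are produced.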

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.
