Comprehensive Empirical Study of Static Code Analysis Tools for C Language

Keywords:

C language, Common Weakness Enumeration (CWE), Programming language, Security Vulnerability, Static Code Analysis

Abstract

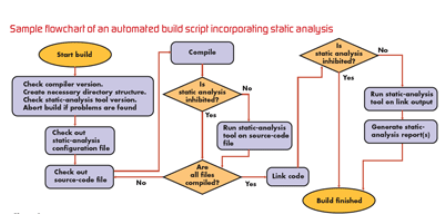

A growing trend in science and technology is the emphasis on software, which draws greater attention to code quality. Static analysis plays a significant role in today's quality assurance process. Its key feature is that faults and vulnerabilities in the code are discovered without executing it. The main challenge is identifying complex code blocks and potential system faults. Various static code analyzers are used for unsafe programming languages such as C and C++, each with its own strengths and constraints. To date, no technique can guarantee that software will never halt, crash, or behave unexpectedly. However, more effective techniques can be chosen to reduce coding defects. Our objective is to examine various static analysis tools and identify their distinguishing features and specifications. In this paper, we examine static analysis tools and their methods, and determine their performance measures. Our focus is to compare tools that assess C programs according to their capability to detect vulnerabilities, and to identify the strengths and limitations of each tool. As an empirical study, we evaluate various performance parameters on the Juliet Test Suite for the C programming language.
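To make concrete the kind of defect that can be found without executing the code, the following is a minimal sketch in the spirit of a Juliet test case, which pairs a flawed variant with a fixed one. It illustrates a stack-based buffer overflow (CWE-121). The function names, the `NAME_CAPACITY` constant, and the truncation policy are assumptions made for illustration; they are not taken from the paper or from the Juliet sources.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

#define NAME_CAPACITY 8 /* hypothetical buffer size, for illustration only */

/* Flawed variant (hypothetical): copies without knowing the size of dst.
 * If src is longer than the destination buffer, strcpy writes past its
 * end. The defect is visible in the source text alone, so it can be
 * reported without running the program. */
void copy_name_bad(char *dst, const char *src)
{
    strcpy(dst, src); /* potential buffer overflow */
}

/* Fixed variant (hypothetical): copies at most dst_size - 1 bytes and
 * always NUL-terminates. Returns the number of bytes copied, excluding
 * the terminator. */
size_t copy_name_good(char *dst, size_t dst_size, const char *src)
{
    assert(dst != NULL && src != NULL && dst_size > 0);

    size_t n = strlen(src);
    if (n >= dst_size) {
        n = dst_size - 1; /* truncate instead of overflowing */
    }
    memcpy(dst, src, n);
    dst[n] = '\0';
    return n;
}
```

With a destination declared as `char buf[NAME_CAPACITY];`, calling `copy_name_good(buf, sizeof buf, input)` stays within bounds for any NUL-terminated `input`, whereas `copy_name_bad(buf, input)` is only safe when `input` is shorter than `NAME_CAPACITY` bytes.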

License

Copyright (c) 2022 Vishruti V. Desai, Vivaksha J. Jariwala

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

IJISAE open access articles are licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. This license requires users to give appropriate credit, provide a link to the license, and indicate if changes were made; if they remix, transform, or build upon the material, they must distribute their contributions under the same license as the original.