Generate Poems and Letters Using an Iterative Neural Network

(Poem Generating using LSTM)

Keywords:

RNN, poem generating, fake text, LSTM

Abstract

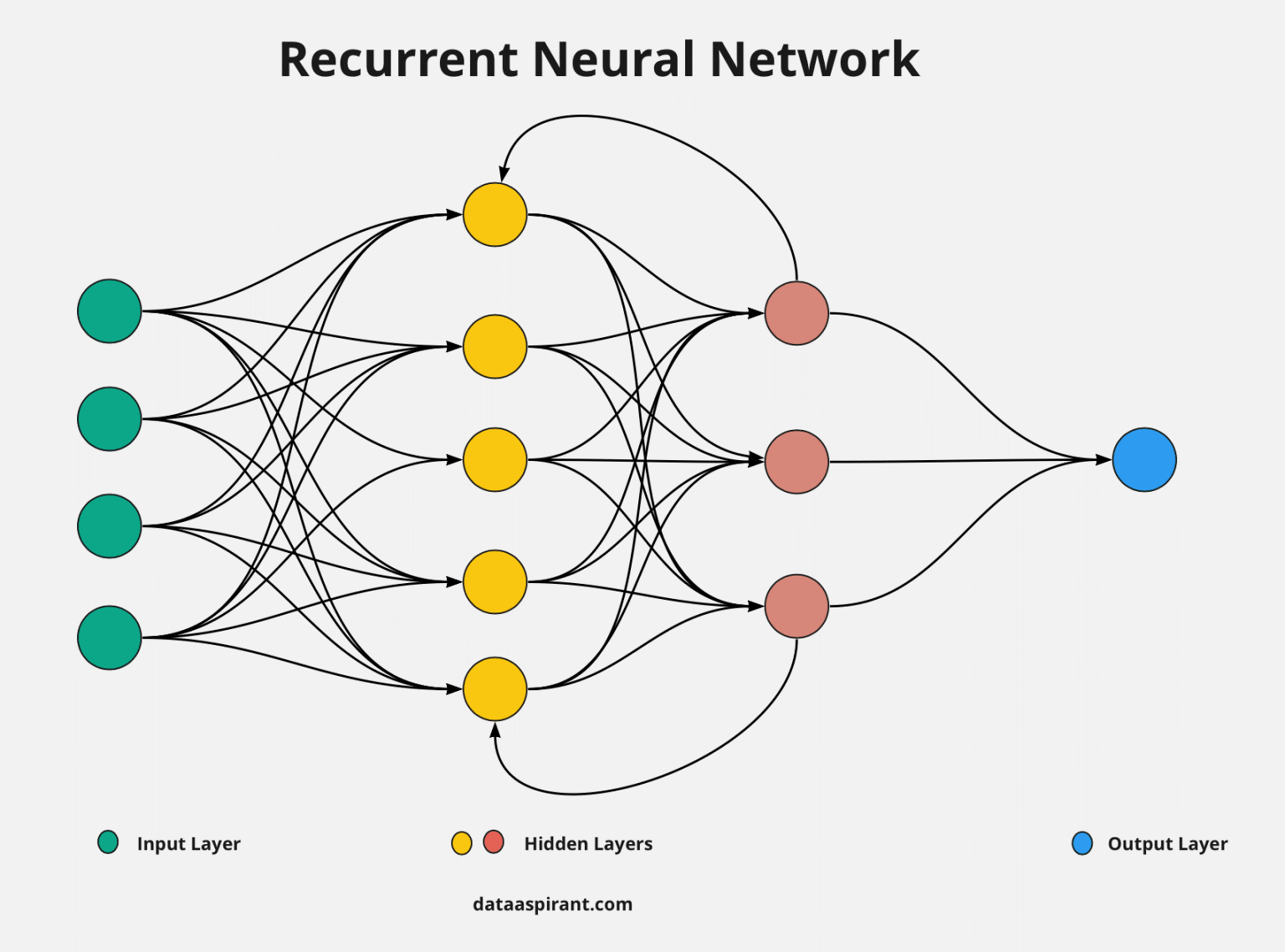

This paper addresses the generation of linguistic text with a well-known type of recurrent neural network, the LSTM. Text generation is one of the main tasks in a well-known branch of artificial intelligence: deep learning models are used to produce realistic text that comes very close to the level of text written by humans, to the point where the generated text can hardly be identified as machine-made. This capability promises substantial benefits for many tasks facing the world today, for example translating from one language to another, generating novels and poems of artistic quality, summarizing long texts, enabling robots to write, and, later, generating sound. All of these tasks can be approached with deep learning models. We present a modern method for generating poetry with a deep model: a recurrent neural network that learns from a sequence, remembers it for a short period of time, and then generates a similar sequence of letters that forms sentences carrying poetic meaning.
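The generation loop described in the abstract (read a sequence of letters, then emit a similar sequence one letter at a time) can be illustrated with a minimal Python sketch. This is not the authors' model: a simple table of character counts stands in for the trained LSTM so that the example stays self-contained. The function names `make_windows`, `fit_counts`, `sample_next` and `generate`, the toy corpus, the window size and the temperature value are all assumptions made for illustration.

```python
import random
from collections import Counter, defaultdict


def make_windows(text, window):
    """Slice text into (context, next_char) training pairs."""
    return [(text[i:i + window], text[i + window])
            for i in range(len(text) - window)]


def fit_counts(pairs):
    """Stand-in for LSTM training (assumption): count which
    character follows each fixed-length context."""
    table = defaultdict(Counter)
    for context, nxt in pairs:
        table[context][nxt] += 1
    return table


def sample_next(counts, temperature, rng):
    """Sample one character; a lower temperature favours
    the most frequent continuations."""
    chars = sorted(counts)
    weights = [counts[c] ** (1.0 / temperature) for c in chars]
    return rng.choices(chars, weights=weights, k=1)[0]


def generate(table, seed, length, temperature=1.0, rng=None):
    """Extend the seed one character at a time, feeding each
    generated character back in as part of the next context."""
    rng = rng or random.Random(0)
    window = len(seed)
    out = seed
    for _ in range(length):
        counts = table.get(out[-window:])
        if not counts:  # context never seen in the corpus: stop early
            break
        out += sample_next(counts, temperature, rng)
    return out


# Toy corpus (hypothetical); a real experiment would use a poetry collection.
corpus = "the rose is red, the violet is blue, the rose is sweet"
pairs = make_windows(corpus, window=4)
table = fit_counts(pairs)
poem = generate(table, seed="the ", length=40, temperature=0.8)
print(poem)
```

In an LSTM-based version, `fit_counts` would be replaced by training the network on the same (context, next character) pairs, and `sample_next` would draw from the network's predicted distribution instead of from raw counts. The windowing and the feed-back loop would stay the same.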

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

IJISAE open access articles are licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. This license requires users to give appropriate credit, provide a link to the license, and indicate whether changes were made; if they remix, transform, or build upon the material, they must distribute their contributions under the same license as the original.