An Augmented Reality framework for Distributed Graphical Simultaneous Localization and Mapping (SLAM)

Keywords:

SLAM, intersections, mediator, representatives, mitigation, guesstimating, solicitations

Abstract

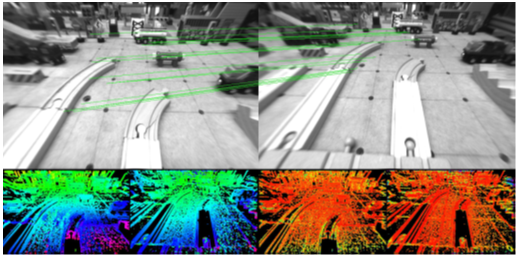

Visual SLAM (Simultaneous Localization and Mapping) has been used for markerless tracking in augmented reality applications. Distributed SLAM helps multiple agents collaboratively explore and build a global map of the environment while estimating their locations within it. One of the main challenges in distributed SLAM is identifying the local map overlaps of these agents, particularly when their initial relative positions are not known. To overcome this challenge, we develop a collaborative AR framework with freely moving agents that have no knowledge of their initial relative positions. Each agent in the proposed framework uses a camera as the only input device for its SLAM process. Furthermore, the framework identifies map overlaps between agents using an appearance-based method.
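The abstract does not specify how the appearance-based comparison is carried out, so the following is only a minimal sketch of one common way such a check could work, not the authors' method. It assumes each agent summarizes a keyframe as an array of feature descriptors, matches descriptors between two agents by nearest neighbor with a distance-ratio test, and flags a possible map overlap when enough matches survive. The function names `ratio_test_matches` and `maps_overlap`, and the thresholds `ratio` and `min_matches`, are hypothetical and chosen for illustration.

```python
import numpy as np


def ratio_test_matches(desc_a, desc_b, ratio=0.75):
    """Return index pairs (i, j) where desc_a[i] confidently matches desc_b[j].

    desc_a, desc_b: float arrays of shape (n, d) and (m, d), with m >= 2.
    The ratio threshold is an illustrative assumption, not a value from the paper.
    """
    # Pairwise Euclidean distances between all descriptors, shape (n, m).
    dists = np.linalg.norm(desc_a[:, None, :] - desc_b[None, :, :], axis=2)
    matches = []
    for i, row in enumerate(dists):
        order = np.argsort(row)
        best, second = order[0], order[1]
        # Keep a match only if the best candidate is clearly closer
        # than the second-best one (distance-ratio test).
        if row[best] < ratio * row[second]:
            matches.append((i, int(best)))
    return matches


def maps_overlap(desc_a, desc_b, min_matches=20, ratio=0.75):
    """Hypothetical decision rule: enough surviving matches suggests an overlap."""
    return len(ratio_test_matches(desc_a, desc_b, ratio)) >= min_matches
```

In a full system, a candidate overlap found this way would normally be verified geometrically before the two local maps are merged; that step is outside the scope of this sketch.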

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.
