Performance Comparison between VGG16 and Inception V3 for Organic Waste and Recyclable Waste Classification

Keywords:

Computer Vision, VGG16, Inception V3, waste classification

Abstract

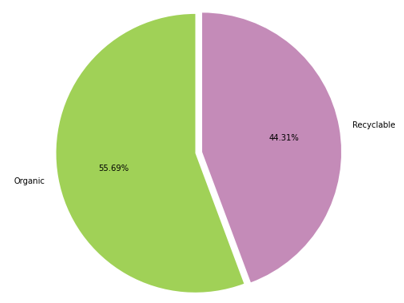

Computer vision covers image recognition tasks, which are commonly learned with convolutional neural network (CNN) models. This paper compares two CNN architectures, VGG16 and Inception V3, on a dataset that distinguishes organic waste from recyclable waste, to determine which performs better. Both models are trained on the dataset's images using a semi-supervised learning scheme. After training, both VGG16 and Inception V3 achieve fairly good accuracy, but VGG16 is the better choice for this dataset: Inception V3 has a more complicated architecture, and its performance on this dataset is not optimal. Further research is therefore needed to optimize both models for this dataset. Waste classification was chosen as the case study because waste management remains an environmental problem.
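The comparison described in the abstract comes down to scoring each trained model's predictions against the same held-out labels and ranking the models. The following is a minimal sketch of that step only, not the authors' evaluation code. The function names `accuracy` and `compare_models`, the placeholder model names, and the toy labels are all assumptions made for illustration. The toy predictions are synthetic and do not reproduce any result from the paper.

```python
from typing import Dict, List, Sequence, Tuple


def accuracy(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Fraction of predictions that match the ground-truth labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    if not y_true:
        raise ValueError("cannot compute accuracy on an empty test set")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def compare_models(
    y_true: Sequence[str],
    preds_by_model: Dict[str, Sequence[str]],
) -> List[Tuple[str, float]]:
    """Return (model_name, accuracy) pairs sorted from best to worst."""
    scores = {
        name: accuracy(y_true, preds) for name, preds in preds_by_model.items()
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Synthetic toy data (hypothetical, NOT results from the paper).
# In practice the predictions would come from the two trained networks.
y_true = ["organic", "recyclable", "organic", "recyclable", "organic"]
toy_predictions = {
    "model_a": ["organic", "recyclable", "organic", "organic", "organic"],
    "model_b": ["organic", "organic", "recyclable", "recyclable", "organic"],
}

ranking = compare_models(y_true, toy_predictions)
for name, score in ranking:
    print(f"{name}: accuracy = {score:.2f}")
```

Accuracy alone can mislead when the two classes are unbalanced, so a fuller comparison would typically also report per-class precision and recall.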

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

IJISAE open access articles are licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. Under this license, users must give appropriate credit, provide a link to the license, and indicate if changes were made. If they remix, transform, or build upon the material, they must distribute their contributions under the same license as the original.