Identification of Empty Land Based on Google Earth Using Convolutional Neural Network Algorithm

Keywords:

CNN, Google Earth, Vacant Land Classification

Abstract

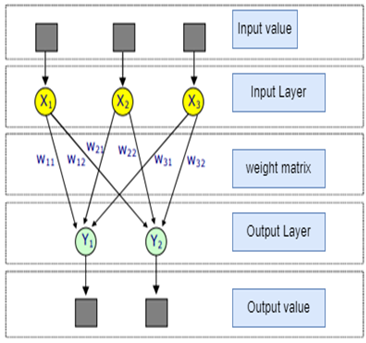

Digital image technology has developed rapidly, both in the models and algorithms used and in the quality of the resulting image processing. Digital image processing can be used to classify the condition of vacant land in a given area. High levels of urbanization lead to population growth and uneven development in some areas. Advances in technology have produced a vast constellation of satellites and aerial platforms. As a result, many remote sensing images with very fine spatial resolution (VFSR) are commercially available to the general public, for example through Google Earth. This platform provides extensive information on spatial conditions, so its data can serve as a medium for analyzing and classifying the availability of vacant land in a given area. To support sound regional and city planning and to address the problems caused by high levels of urbanization, a model is needed that can automatically classify vacant land using data openly available on Google Earth. This study therefore classifies vacant land from Google Earth images using a deep learning model, the Convolutional Neural Network (CNN). CNN was chosen for its strong performance in image classification. The experimental results show that the CNN algorithm yields optimal results for this image classification task.
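The abstract does not describe the network's layers, so the following is only a minimal sketch of the three operations a CNN typically stacks before its classifier: convolution, ReLU activation, and max pooling. The 5x5 patch, the edge-detecting kernel, and all function names are hypothetical and chosen purely for illustration; they are not the authors' model or data.

```python
# Illustrative sketch only: patch, kernel, and function names are assumptions,
# not taken from the paper.

def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the core op of a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(fmap):
    """Element-wise ReLU: negative responses are clipped to zero."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool2x2(fmap):
    """Non-overlapping 2x2 max pooling; an odd trailing row/column is dropped."""
    return [
        [max(fmap[i][j], fmap[i][j + 1], fmap[i + 1][j], fmap[i + 1][j + 1])
         for j in range(0, len(fmap[0]) - 1, 2)]
        for i in range(0, len(fmap) - 1, 2)
    ]


# Hypothetical 5x5 grayscale patch: dark on the left, bright on the right.
patch = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hypothetical 2x2 kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 1],
          [-1, 1]]

features = conv2d(patch, kernel)   # 4x4 feature map
pooled = max_pool2x2(relu(features))  # 2x2 summary of where the edge lies
print(pooled)
```

In a trained network the kernel values are learned rather than hand-set, and the pooled feature maps from many kernels would feed a classifier that outputs a label such as "vacant" or "not vacant".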

License

This work is licensed under a Creative Commons Attribution-ShareAlike 4.0 International License.

IJISAE open access articles are licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. Under this license, users must give appropriate credit, provide a link to the license, and indicate if changes were made; if they remix, transform, or build upon the material, they must distribute their contributions under the same license as the original.